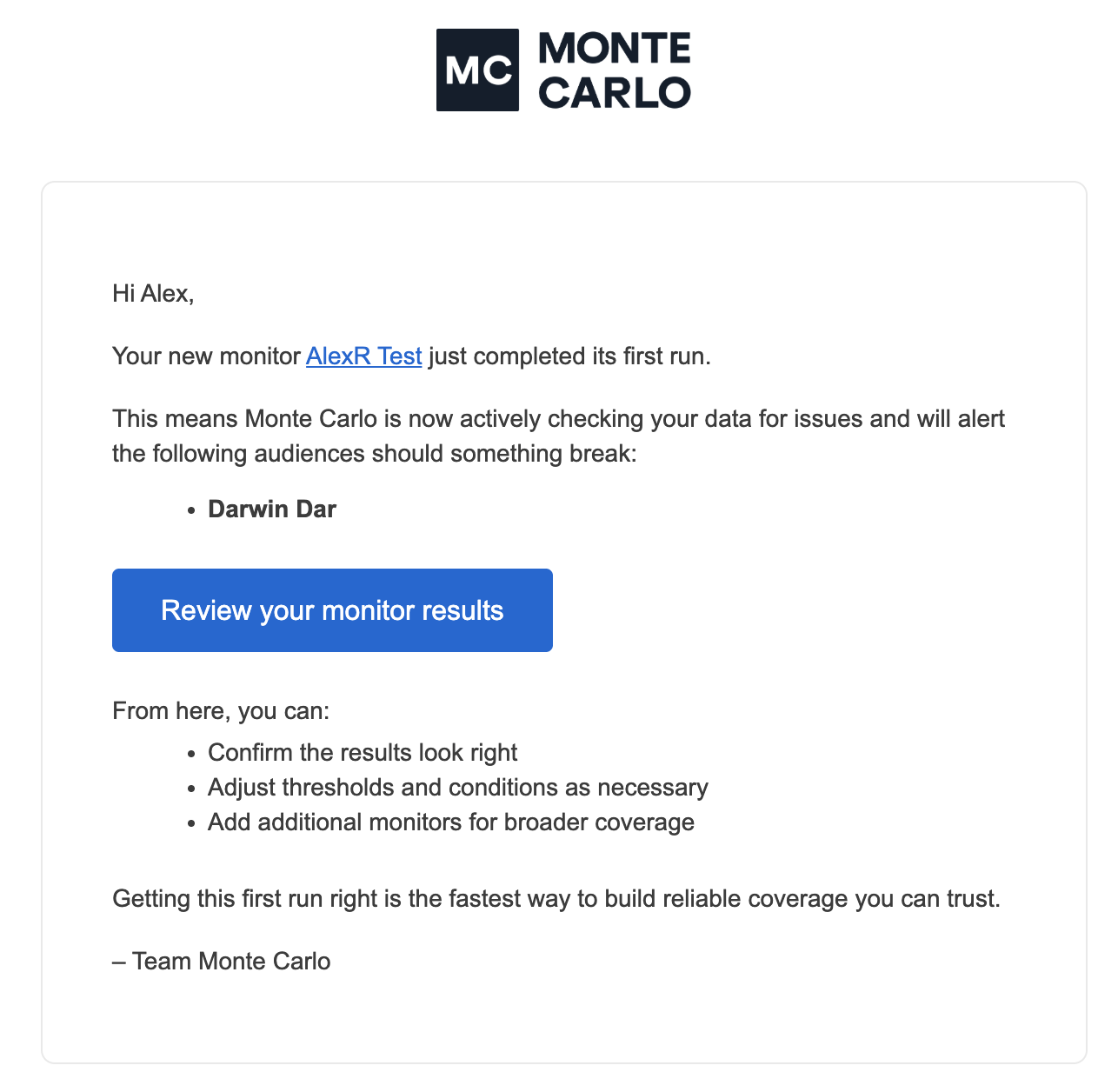

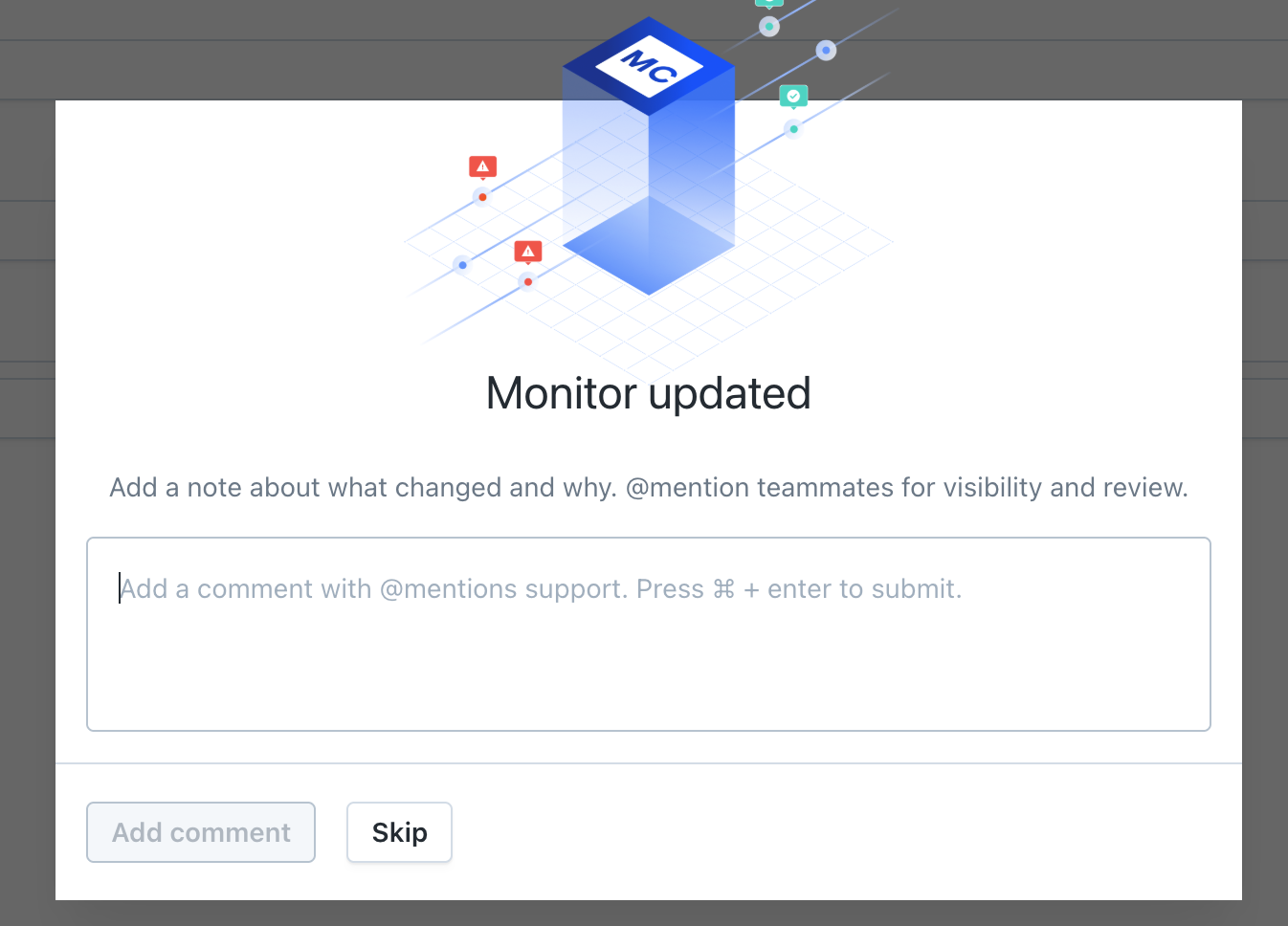

When you create a new monitor, you’ll now receive an email once it completes its first run, so you know whether it’s working as expected.

What to expect:

- ✅ If the first run succeeds, you’ll get a confirmation that it completed successfully, with a link to review the results

- ⚠️ If the first run fails, you’ll get a notification with a link to review and fix the issue

Each email includes a direct link to the monitor details page, where you can review the results, adjust thresholds or settings, and re-run the monitor if needed.

The notification is sent once per monitor. Draft and disabled monitors don’t trigger it, and you can unsubscribe at any time.