Freshness Detector

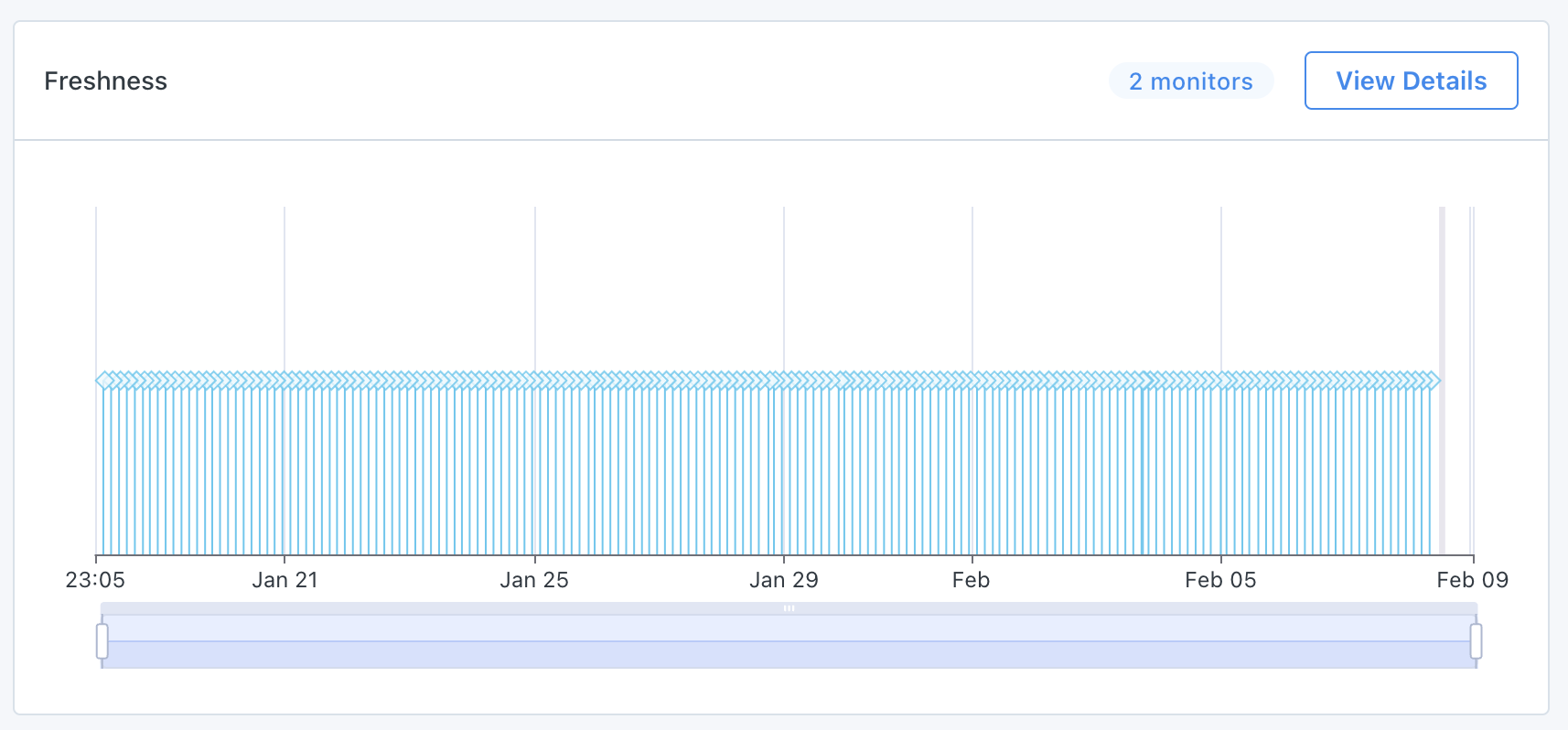

Monte Carlo’s freshness detector reads table update times from your warehouse or lake metadata and alerts you when a table has not been updated recently enough.

Our models use a 6-week moving window of training data. They remove outliers based on the number of samples, then calculate features from the time series. These features, together with the pre-processing features, are used to decide the alert threshold for the table.
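To make the idea concrete, here is a minimal sketch of how a threshold could be derived from update times in a 6-week window. It is not Monte Carlo's actual model: the function names, the median-based outlier rule, the `min_samples` cutoff, and the "mean plus `k` standard deviations" threshold are all assumptions chosen for illustration.

```python
from datetime import datetime, timedelta
from statistics import mean, median, pstdev

WINDOW = timedelta(weeks=6)  # training window stated in the text


def freshness_threshold(update_times, now, k=3.0, min_samples=5):
    """Return an illustrative alert threshold in hours, or None if history is too short."""
    # Keep only updates inside the 6-week moving window.
    recent = sorted(t for t in update_times if now - WINDOW <= t <= now)
    # Gaps between consecutive updates, in hours.
    gaps = [(b - a).total_seconds() / 3600 for a, b in zip(recent, recent[1:])]
    if len(gaps) < 2:
        return None
    # Hypothetical outlier rule: only filter when there are enough samples,
    # and drop gaps longer than 3x the median gap.
    if len(gaps) >= min_samples:
        cutoff = 3 * median(gaps)
        gaps = [g for g in gaps if g <= cutoff]
    # Hypothetical threshold: mean gap plus k standard deviations.
    return mean(gaps) + k * pstdev(gaps)


def is_stale(last_update, now, threshold_hours):
    """True when the time since the last update exceeds the threshold."""
    return (now - last_update).total_seconds() / 3600 > threshold_hours
```

With this sketch, a table that updates exactly once a day gets a threshold equal to its usual gap, so any delay beyond the normal cadence would be flagged. A real model would leave more headroom.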

Under The Hood

Part of our time-series preprocessing is classifying each table into categories such as ETL versus non-ETL updated tables, weekend-patterned tables, and unimodal versus multimodal update patterns.
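As an illustration of one such category, the sketch below flags a table as weekend-patterned when almost none of its updates fall on a Saturday or Sunday. The function name, the `max_weekend_share` parameter, and this definition of a weekend pattern are assumptions for the example, not the classifier Monte Carlo uses.

```python
from datetime import datetime, timedelta


def has_weekend_pattern(update_times, max_weekend_share=0.05):
    """Hypothetical check: True when the table rarely or never updates on weekends."""
    if not update_times:
        return False
    # datetime.weekday() returns 5 for Saturday and 6 for Sunday.
    weekend_updates = sum(1 for t in update_times if t.weekday() >= 5)
    return weekend_updates / len(update_times) <= max_weekend_share
```

A table identified this way could then be given a longer allowed gap over the weekend, so that a quiet Saturday does not trigger an alert.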

To adapt quickly to the patterns we see, our freshness model is retrained with new data every 6 hours. It also performs a state-change detection analysis to determine whether the update pattern has changed. If it finds such a change, all of the features described above are calculated only from the last 2 weeks of the training window.
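The window-shortening step can be sketched as follows. This is an assumption-laden illustration, not the actual state-change detector: it compares the median update gap in the last 2 weeks with the median gap in the preceding 4 weeks, and the `ratio` parameter and helper names are hypothetical.

```python
from datetime import datetime, timedelta
from statistics import median

FULL_WINDOW = timedelta(weeks=6)    # full training window from the text
RECENT_WINDOW = timedelta(weeks=2)  # window used after a detected change


def gaps_hours(times):
    """Gaps between consecutive update times, in hours."""
    ordered = sorted(times)
    return [(b - a).total_seconds() / 3600 for a, b in zip(ordered, ordered[1:])]


def training_window(update_times, now, ratio=2.0):
    """Return the window to train on: 2 weeks if the cadence shifted, else 6 weeks."""
    cutoff = now - RECENT_WINDOW
    older = [t for t in update_times if now - FULL_WINDOW <= t < cutoff]
    recent = [t for t in update_times if cutoff <= t <= now]
    old_gaps, new_gaps = gaps_hours(older), gaps_hours(recent)
    if not old_gaps or not new_gaps:
        return FULL_WINDOW  # not enough data to compare the two periods
    old_med, new_med = median(old_gaps), median(new_gaps)
    # Hypothetical rule: a change is a shift in median gap by more than `ratio`.
    changed = new_med > ratio * old_med or old_med > ratio * new_med
    return RECENT_WINDOW if changed else FULL_WINDOW
```

For example, a table that moved from daily to hourly updates two weeks ago would be trained on the recent 2 weeks only, so the old daily cadence no longer inflates its threshold.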

Fine-Tuning the Models

Sensitivity

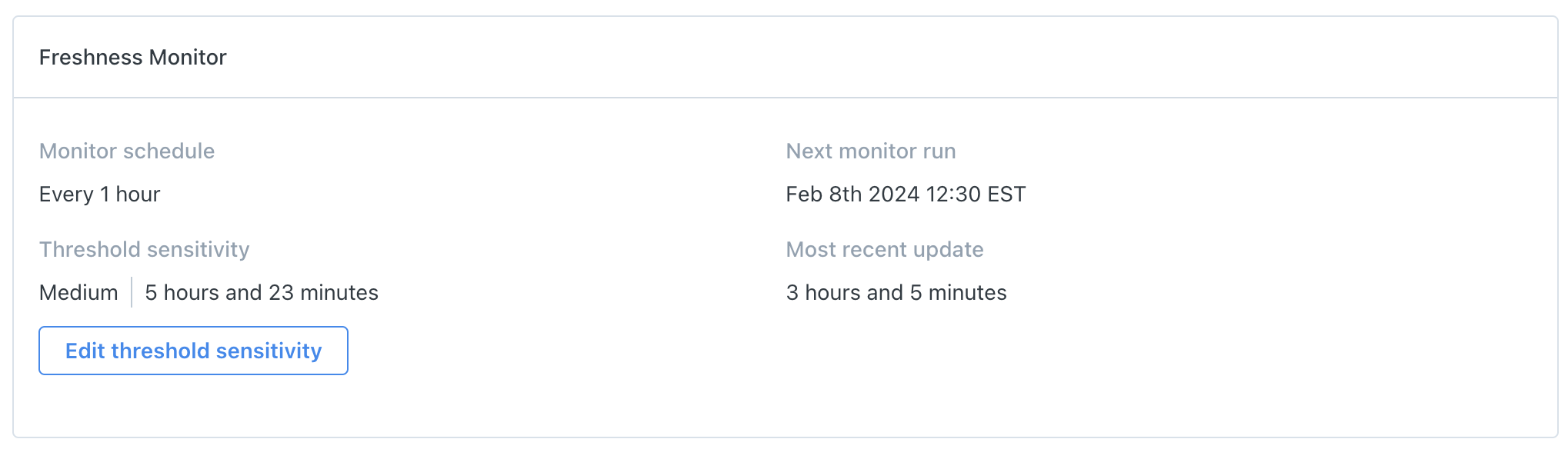

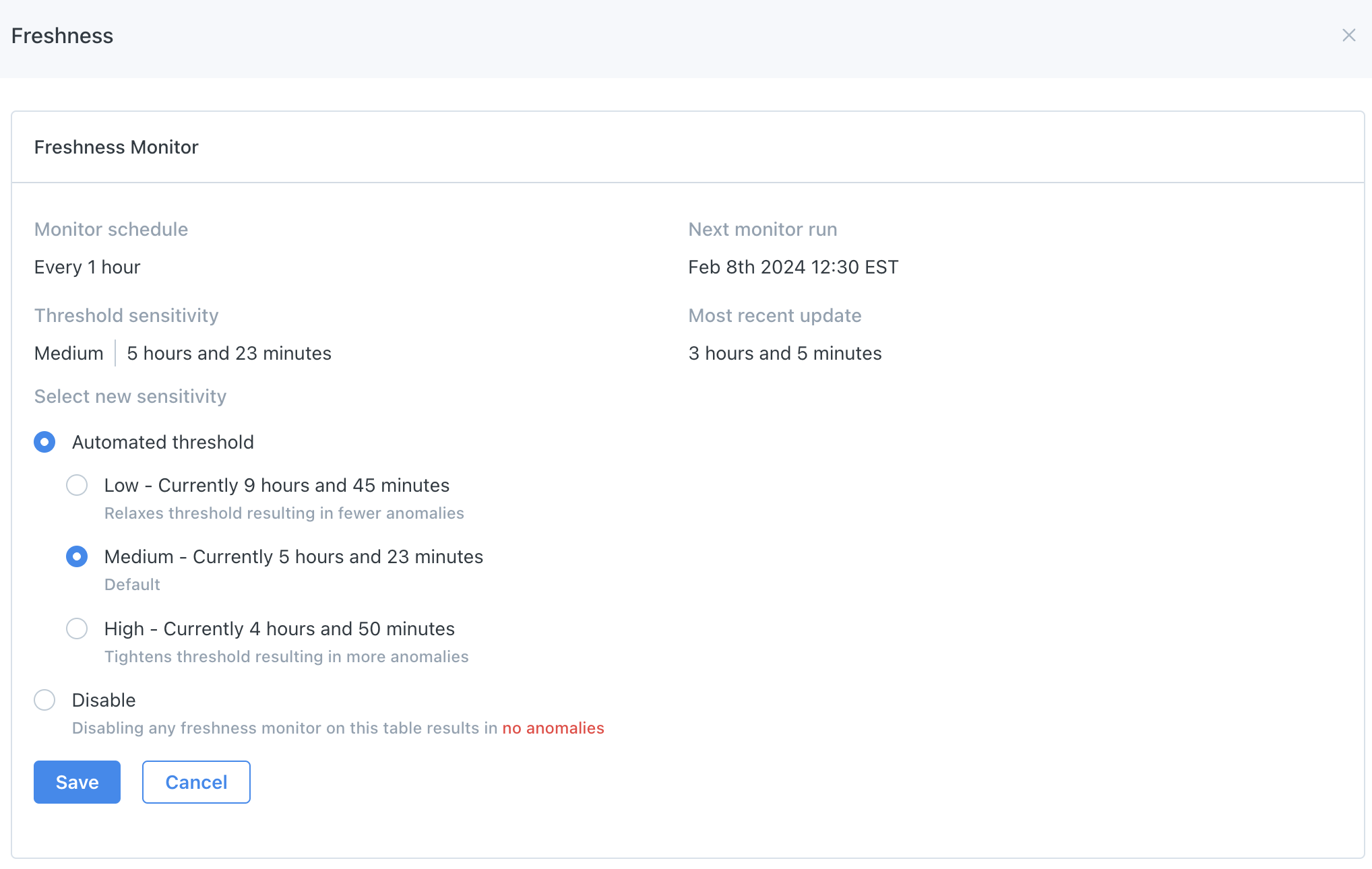

On the Asset page, you can change the model's sensitivity by clicking View Details and then the Edit threshold sensitivity button. Changing the sensitivity widens or narrows the confidence interval used for the threshold. By default, all thresholds are set to Medium sensitivity. Learn more about sensitivity here.
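One way to picture the effect of sensitivity is as a multiplier on the width of the interval around the expected update gap. The sketch below assumes that higher sensitivity means a narrower interval and therefore more alerts. The level names other than Medium, the multiplier values, and the function name are hypothetical.

```python
# Hypothetical multipliers: higher sensitivity gives a narrower interval.
SENSITIVITY_MULTIPLIER = {"low": 4.0, "medium": 3.0, "high": 2.0}


def upper_bound_hours(mean_gap_hours, std_gap_hours, sensitivity="medium"):
    """Illustrative upper bound on the time between updates, in hours."""
    k = SENSITIVITY_MULTIPLIER[sensitivity]
    return mean_gap_hours + k * std_gap_hours
```

Under these assumed values, a table with a 24-hour mean gap and a 2-hour standard deviation would alert after 28 hours at high sensitivity and after 32 hours at low sensitivity.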
